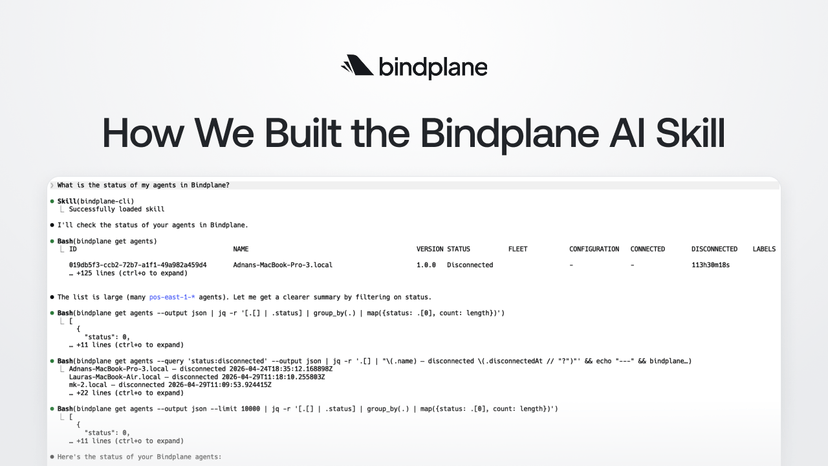

Faster OpenTelemetry Migrations from Splunk to SecOps with Bindplane

Migrating from Splunk to Google SecOps doesn't have to be a cutover — with Bindplane, you can run both in parallel, shift workloads one source at a time, and decommission Splunk only when you're ready.

Many security teams are looking to move off Splunk, whether to reduce licensing costs, consolidate their SIEM, or take advantage of Google SecOps' built-in threat intelligence and YARA-L detection capabilities. But migrations aren’t easy, and no one wants to run blind while they evaluate and move to a new platform. With OpenTelemetry and Bindplane, you can easily make the switch to SecOps without impacting your existing stack.

If you already have telemetry pipelines shipping logs to Splunk, this post walks through how to add SecOps alongside those existing destinations using Bindplane. No rip-and-replace required. You can start routing data to SecOps today, evaluate it against what you're already using, and migrate at your own pace.

We'll cover three things: adding SecOps as an additional destination to an existing pipeline, using routing logic to control what data goes where, and using Bindplane's processor capabilities to format and enrich data before it reaches SecOps.

Why migrate to SecOps?

Security teams typically look for three things in their SIEM: better economics as data volumes grow, stronger out-of-the-box detection coverage, and less operational overhead. Most platforms make it hard to have all three at once – licensing costs that scale with volume create pressure to drop data, detection coverage requires significant custom rule development, and managing multiple tools across agents, formats, and integrations adds up.

Google SecOps scales to ingest, analyze, and search petabytes of data, and offers 12 months of fully searchable retention by default -- without the cost pressure that comes with volume-based licensing. Threat intelligence is operationalized directly in the platform, and detections are developed and continuously maintained by Google's threat research team, so you're not starting from scratch on coverage. Analysts can search data and create detections using natural language, which reduces the ramp time for teams migrating from Splunk SPL.

But here's the thing: nobody wants to rip out their entire security stack overnight. You need to run SecOps alongside what you already have, prove the value, and transition gradually. That's exactly what Bindplane enables.

Prerequisites

Before getting started, you'll need:

- A running Bindplane instance (cloud or self-hosted) with at least one collector installed and reporting data. If you're new to Bindplane, check out our Getting Started Guide.

- A Google SecOps instance. Learn more about SecOps here.

- An existing telemetry pipeline in Bindplane sending data to your current SIEM. This is the pipeline you'll be adding SecOps alongside.

Step 1: Add SecOps as an additional destination

The simplest way to start evaluating SecOps is to send a copy of the telemetry you're already collecting. Bindplane supports multiple destinations per configuration, so you can add SecOps alongside your current backend, verify data is flowing correctly, then start shifting workloads.

In Bindplane, open the configuration you want to modify and click "(+) Destination." Select SecOps from the destination list. Bindplane has a native SecOps destination type, so there's no need to configure a generic OTLP exporter manually.

Configure the SecOps destination with your Chronicle customer ID and service account credentials. You can find your customer ID under Settings > Profile > Organization Details in the SecOps interface, and download your credentials file from Settings > Collection Agents > Ingestion Authentication File.

After saving the destination, you'll see it appear in the topology view, but it won't be receiving data yet. The new destination shows up disconnected from your pipeline, as in the screenshot below.

To start sending data to SecOps, you need to connect it to your pipeline. Hover over the processor node on the source side of your pipeline and click the + button that appears, then click the processor node on the SecOps destination. This draws a line between the two, routing telemetry to that destination.

One thing to keep in mind: Google SecOps expects raw, unparsed logs so its own parsers can process them correctly. If your sources support sending raw data (typically indicated by “Include Log Record Original” in the Advanced menu), make sure that option is enabled before routing to SecOps.
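To make the raw-log requirement concrete, here is a minimal Python sketch of the check you'd want to hold true before a record reaches SecOps. It assumes the original line is carried in the OpenTelemetry `log.record.original` attribute when "Include Log Record Original" is enabled. The dictionary record shape and the `raw_payload` helper are hypothetical and not part of Bindplane.

```python
ORIGINAL_ATTR = "log.record.original"  # assumed attribute holding the raw line


def raw_payload(record):
    """Return the raw log line for a record, or None if only parsed data remains."""
    original = record.get("attributes", {}).get(ORIGINAL_ATTR)
    if original is not None:
        return original
    body = record.get("body")
    # A string body is still unparsed; a dict body means the raw line was lost.
    return body if isinstance(body, str) else None


# Hypothetical records: one parsed upstream with the original kept, one without.
parsed_with_original = {
    "body": {"action": "allow", "src": "10.0.0.5"},
    "attributes": {ORIGINAL_ATTR: "1,2024/01/10 12:00:00,TRAFFIC,allow,10.0.0.5"},
}
parsed_only = {"body": {"action": "allow"}, "attributes": {}}

print(raw_payload(parsed_with_original))
print(raw_payload(parsed_only))
```

A `None` result is the signal to go back to the source and enable the raw-record option before connecting it to SecOps.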

Before rolling out, add the Google SecOps Standardization processor right before the SecOps destination. This processor lets you configure the log type, namespace, and ingestion labels for logs, which is how SecOps knows which parser to apply to your data. It's best practice to always explicitly set this when sending logs to Google SecOps. If you're not sure which log type to use, Pipeline Intelligence can automatically identify log types from your snapshot data and generate a SecOps Standardization processor configuration for you.
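Conceptually, the standardization step attaches routing metadata to each log without touching the raw payload. The Python sketch below models that idea only. The `LogRecord` class, the `standardize` function, the attribute keys, and the example values (including the `PAN_FIREWALL` log type) are illustrative assumptions, not the processor's actual implementation or configuration schema.

```python
from dataclasses import dataclass, field


@dataclass
class LogRecord:
    body: str  # raw, unparsed log line
    attributes: dict = field(default_factory=dict)


def standardize(record, log_type, namespace, ingestion_labels):
    """Return a copy of the record tagged with SecOps metadata; body is untouched."""
    attrs = dict(record.attributes)
    attrs["log_type"] = log_type  # tells SecOps which parser to apply
    attrs["namespace"] = namespace
    attrs["ingestion_labels"] = dict(ingestion_labels)
    return LogRecord(body=record.body, attributes=attrs)


raw = LogRecord(body="1,2024/01/10 12:00:00,TRAFFIC,allow,10.0.0.5")
tagged = standardize(raw, "PAN_FIREWALL", "prod-network", {"env": "prod"})
print(tagged.attributes)
```

The point of the model is the separation of concerns: the log body stays raw for the SecOps parser, and everything SecOps needs to pick that parser travels as metadata.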

This is a good opportunity to add any additional processors that shape your data for SecOps before rolling out. You can add destination-level processors on the SecOps path to filter, enrich, or transform data independently of what gets sent to Splunk.

Click Start Rollout to deploy the updated configuration to your collectors. You can use progressive rollouts to deploy to a subset of collectors first and verify data is arriving in SecOps before rolling out to the rest. Once the rollout completes, telemetry will begin flowing to both destinations simultaneously. You can confirm this by searching for recent logs or UDM events in SecOps.

At this point, you're running a dual-write setup. Every log your collectors gather is being sent to both Splunk and SecOps. This is a safe way to validate SecOps' performance and query speed without affecting your production security workflows.
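One simple way to validate a dual-write setup is to compare per-log-type event counts from both platforms over the same time window. The Python sketch below shows that comparison under stated assumptions: the event lists stand in for exports you would pull from each platform's search, and the `parity_report` function, the 1% tolerance, and the log type names are all hypothetical.

```python
from collections import Counter


def count_by_type(events):
    """Count events per log type."""
    return Counter(e["log_type"] for e in events)


def parity_report(splunk_events, secops_events, tolerance=0.01):
    """Compare per-type counts and flag types whose relative drift exceeds tolerance."""
    splunk = count_by_type(splunk_events)
    secops = count_by_type(secops_events)
    report = {}
    for log_type in sorted(set(splunk) | set(secops)):
        a, b = splunk[log_type], secops[log_type]
        drift = abs(a - b) / max(a, b)  # max is >= 1 for any type seen at least once
        report[log_type] = {"splunk": a, "secops": b, "ok": drift <= tolerance}
    return report


# Hypothetical exports covering the same time window.
splunk_sample = [{"log_type": "PAN_FIREWALL"}] * 100 + [{"log_type": "WINEVTLOG"}] * 50
secops_sample = [{"log_type": "PAN_FIREWALL"}] * 100 + [{"log_type": "WINEVTLOG"}] * 40

report = parity_report(splunk_sample, secops_sample)
for log_type, row in report.items():
    print(log_type, row)
```

A log type flagged as not `ok` is worth investigating before you treat SecOps as the system of record for that source.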

Step 2: Route specific data to SecOps

Running everything in parallel is a great starting point, but most teams don't want to send 100% of their data to every destination indefinitely. The next step in any migration is to start shifting specific workloads to SecOps while keeping Splunk running for everything else.

Bindplane gives you fine-grained control over what goes where using routing connectors and destination-level processors. Here are two patterns that come up in most migrations.

Example: Migrate log sources one at a time

Once you've comfortably validated SecOps in parallel, the next step is to start shifting workloads off Splunk. A standard approach is to do this log source by log source, not all at once. Pick a source, validate its detections and dashboards in SecOps, then stop sending that source's data to Splunk. Rinse and repeat.

Say you have a gateway collecting logs from multiple sources – firewalls, endpoints, and Windows events – all going to Splunk. You want to migrate your Palo Alto logs to SecOps first because your network security team has already validated their detection rules in SecOps and is ready to cut over.

Add a Filter by Condition processor on your Splunk destination, configured to exclude logs where the log_type attribute (or whichever resource attribute identifies the log type) matches the one you're migrating. Those logs will stop going to Splunk but will continue flowing to SecOps.

Once the team has confirmed their Palo Alto logs are landing correctly in SecOps, their detections are firing as expected, and their dashboards are working, you repeat the process for the next log source. Each time, add another exclusion on the Splunk side. When all sources have been migrated, disconnect the Splunk destination entirely. Starting with lower-risk sources gives your team a chance to build confidence before migrating business-critical data.
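The source-by-source pattern boils down to an exclusion list on the Splunk path that grows as each source is validated. Here is a small Python model of that behavior. It is a sketch of the logic, not Bindplane's Filter by Condition processor itself, and the `log_type` key, the type names, and the helper functions are assumptions made for illustration.

```python
# Log types already validated in SecOps; extend this set as each source migrates.
MIGRATED_LOG_TYPES = {"PAN_FIREWALL"}


def keep_for_splunk(record):
    """Keep a record on the Splunk path only if its log type has not migrated yet."""
    return record.get("log_type") not in MIGRATED_LOG_TYPES


def fan_out(records):
    """SecOps receives everything; Splunk receives only non-migrated sources."""
    to_secops = list(records)
    to_splunk = [r for r in records if keep_for_splunk(r)]
    return to_splunk, to_secops


batch = [
    {"log_type": "PAN_FIREWALL", "body": "traffic allow"},
    {"log_type": "WINEVTLOG", "body": "logon event"},
]
to_splunk, to_secops = fan_out(batch)
print(len(to_splunk), len(to_secops))
```

When every log type is in the migrated set, the Splunk list is always empty, which is the point at which disconnecting the Splunk destination changes nothing.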

Example: Pilot SecOps with a specific region or business unit

Rather than migrating sources globally all at once, another approach is to pilot SecOps with a specific region or business unit first. This lets a subset of your team validate SecOps in a real environment while the rest of the organization stays on Splunk.

A routing connector makes this clean. Route logs from your pilot region exclusively to SecOps, while everything else continues flowing to Splunk. Once the pilot team has rebuilt and validated their detections, alerts, dashboards, and workflows in SecOps, update the routing connector to bring additional regions or business units across. When all groups have migrated, you remove Splunk entirely.
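Unlike the filter pattern, a routing split sends each record to exactly one destination. The Python sketch below models that decision. The `region` attribute, the region names, and the `route` and `split` helpers are hypothetical and only illustrate the logic, not the routing connector's real configuration.

```python
# Regions taking part in the SecOps pilot; grow this set as more groups migrate.
PILOT_REGIONS = {"emea"}


def route(record, pilot_regions=PILOT_REGIONS):
    """Return the single destination for a record based on its region."""
    return "secops" if record.get("region") in pilot_regions else "splunk"


def split(records, pilot_regions=PILOT_REGIONS):
    """Partition a batch into per-destination lists."""
    out = {"secops": [], "splunk": []}
    for record in records:
        out[route(record, pilot_regions)].append(record)
    return out


batch = [
    {"region": "emea", "body": "vpn login"},
    {"region": "amer", "body": "vpn login"},
    {"body": "no region set"},
]
routed = split(batch)
print({dest: len(items) for dest, items in routed.items()})
```

Note the default: records without a matching region stay on Splunk, so nothing is dropped while the pilot is scoped narrowly.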

As the migration progresses, these two patterns cover most of what you'll need. Filters let you gradually peel sources off your legacy destination while SecOps receives everything. The routing connector lets you make clean splits when you want to run a controlled pilot before committing to a broader rollout. Both approaches keep your existing stack operational throughout the process.

Step 3: Process data for SecOps

Because SecOps expects raw, unparsed logs, the processing you apply on the SecOps path is different from what you might do for other destinations. Rather than parsing or normalizing log structure, the focus is on controlling what reaches SecOps and how it's labeled.

Processors on the SecOps path can handle things like dropping fields that contain sensitive data before it leaves your environment, redacting PII, or filtering out log types that don't need to be retained in SecOps. These run independently of whatever processing you've configured for Splunk, so you can tailor each destination path without affecting the other.
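As a rough illustration of that kind of SecOps-path processing, the Python sketch below drops an unneeded log type, removes a sensitive attribute, and redacts email addresses in the log body. The attribute names, the log type, the email pattern, and the `prepare_for_secops` function are all assumptions chosen for the example. In practice you would configure the equivalent Bindplane processors rather than write code.

```python
import re

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SENSITIVE_ATTRS = {"session_token"}  # hypothetical attribute to strip
DROPPED_LOG_TYPES = {"DEBUG_APP"}  # hypothetical type not retained in SecOps


def prepare_for_secops(record):
    """Return a sanitized copy of the record, or None if it should be dropped."""
    if record["attributes"].get("log_type") in DROPPED_LOG_TYPES:
        return None
    attrs = {k: v for k, v in record["attributes"].items() if k not in SENSITIVE_ATTRS}
    body = EMAIL.sub("[REDACTED_EMAIL]", record["body"])
    return {"body": body, "attributes": attrs}


record = {
    "body": "login failed for alice@example.com from 10.0.0.5",
    "attributes": {"log_type": "WINEVTLOG", "session_token": "abc123"},
}
clean = prepare_for_secops(record)
print(clean["body"])
```

Because the function returns a copy, the record on the Splunk path is left exactly as it was, which mirrors how destination-level processors keep the two paths independent.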

Putting it all together

The overall arc is straightforward: start with parallel ingestion, shift log types one at a time as your team validates each one in SecOps, and eventually decommission Splunk. Most teams can get through the full migration in a matter of weeks, not months, and Bindplane handles the rollouts centrally so you're never hand-editing collector configs along the way.

What's next

Bindplane supports more than 140 sources and destinations out of the box, and SecOps is a first-class integration. Whatever you're migrating from, there's a good chance Bindplane already has a native integration for it.

The security landscape is shifting toward open standards and cloud-native platforms. SecOps and Bindplane together make it possible to join that shift without disrupting the systems your team relies on today. Start with a single pipeline, send some data, and see what SecOps can do.

- Get started with SecOps: cloud.google.com/security/products/security-operations

- Get started with Bindplane: app.bindplane.com

- Bindplane + SecOps docs: SecOps integration guide