Contributing Distributed Partition Ownership to the Azure Event Hub Receiver

If you're running OpenTelemetry collectors against Azure Event Hubs, distributed partition ownership and checkpointing just got significantly better.

Your fleet now self-organizes. Failover is automatic. Restarts don't lose data. Here's how we got here.

Distributed Partition Ownership: The Operational Model That Should Have Always Existed

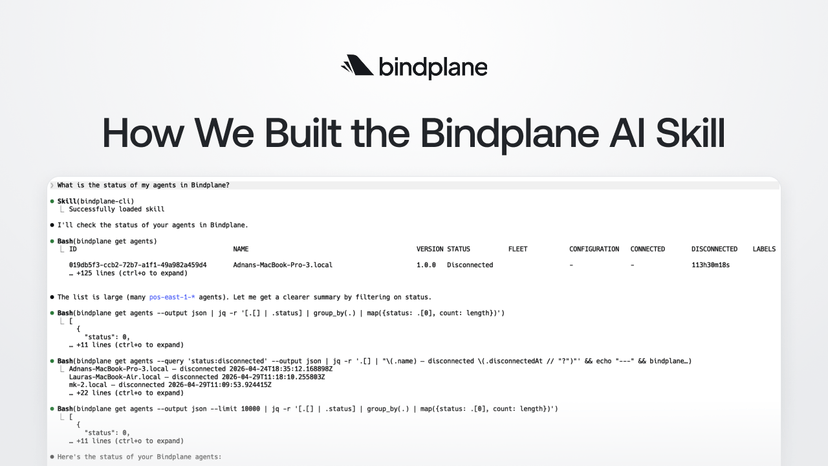

Before this release, running the Azure Event Hub receiver across a fleet of collectors meant manually assigning a partition to each one. Ten partitions meant ten explicit assignments. If a collector went down, its partition went unread until someone noticed.

We donated a new Azure SDK implementation and distributed partition ownership to the OpenTelemetry Collector in March 2026.

The new model uses an Azure Blob Storage account as a coordination layer. When a collector starts, it checks blob storage, finds an unclaimed partition, and claims it. When a collector goes down, another one takes over its partition. Load balancing and failover both happen automatically, with no manual assignments.

The recommended setup is simple:

- Match the number of partitions to the number of collectors.

- Deploy additional collectors for redundancy. Standby collectors will automatically take over a partition when one becomes available.
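The sizing rule reduces to simple arithmetic. The Go sketch below assumes each partition has exactly one owner and that partitions are spread as evenly as possible across collectors; `planFleet` and `fleetPlan` are hypothetical names used only for illustration, not part of the receiver.

```go
package main

import "fmt"

// fleetPlan summarizes how a fleet of collectors splits a set of partitions,
// assuming each partition has exactly one owner and ownership is spread as
// evenly as possible. Both the type and that assumption are illustrative.
type fleetPlan struct {
	Active    int // collectors that own at least one partition
	Standby   int // collectors waiting for a partition to free up
	MaxPerCol int // most partitions any single collector owns
}

func planFleet(partitions, collectors int) fleetPlan {
	if partitions <= 0 || collectors <= 0 {
		return fleetPlan{}
	}
	active := collectors
	if partitions < collectors {
		active = partitions
	}
	return fleetPlan{
		Active:    active,
		Standby:   collectors - active,
		MaxPerCol: (partitions + collectors - 1) / collectors, // ceiling division
	}
}

func main() {
	for _, c := range []int{10, 12, 4} {
		p := planFleet(10, c)
		fmt.Printf("10 partitions, %d collectors: active=%d standby=%d max/collector=%d\n",
			c, p.Active, p.Standby, p.MaxPerCol)
	}
}
```

With 10 partitions and 10 collectors, every collector owns exactly one partition. With 12 collectors, two sit on standby. With only 4, some collectors end up owning 3 partitions each, which is why matching the counts is the recommended baseline.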

For configuration details, see the Bindplane docs or the OpenTelemetry Collector docs.

Checkpointing: Restarts Don't Have to Mean Data Loss

Distributed ownership only works if handoffs are clean. Checkpointing is what makes that possible.

Every collector continuously writes its position to blob storage: for each partition it owns, it records the last successfully read event. When a collector restarts, or another collector takes over its partition, the new owner reads that checkpoint and resumes from there instead of starting over. That means no data loss on restart or handoff, and no reprocessing of events that were already checkpointed.

We rely on the same pattern in our Kafka receiver. It's a baseline requirement for any receiver where data reliability actually matters.

Why This Took Six Months to Contribute Upstream: The SDK Migration

These features didn't arrive in isolation. Getting here meant refactoring the foundation.

In September 2025, a Bindplane customer reported that the Azure Event Hub receiver was dropping data. The receiver had been built on the now-deprecated Azure Event Hubs Go SDK, so the first step in modernizing it was moving to the new Azure SDK for Go. The main challenge was a difference in models: the old SDK used streaming, while the new SDK uses polling. To make the migration safe, the receiver was refactored around a shared internal interface that both SDK implementations could satisfy. That let the legacy streaming implementation and the new polling implementation run side by side and be swapped back and forth during testing and rollout.

Our constraint was strict: both SDKs had to coexist through testing and performance evaluation before we could flip the default. The migration landed in October 2025 behind an alpha flag and was followed by four months of bug fixes and stability hardening. In February 2026, we promoted the receiver to beta and dropped the old SDK.

Why We Went Upstream

When we found the problem, the easier path was obvious: build a new receiver into the Bindplane Distribution of the OpenTelemetry Collector (BDOT Collector) and ship it for our own customers. We didn't take it. We wanted to contribute the fix back to the OpenTelemetry community.

The community was already relying on this receiver. Solving the issue only in BDOT would have fixed it for our customers while leaving the broader community behind. We did not want that. Instead, we worked upstream and contributed the migration incrementally to preserve backwards compatibility. Along the way, the maintainers offered me code ownership of the receiver, which helped streamline reviews and accelerate the migration effort.

Every decision since has been made with the broader community in mind: backwards compatibility, clean rollback paths, thorough testing, and documentation that would hold up to long-term maintenance. It took longer, sure, but I’m confident it was the right call.

Drop the Kafka Workaround

For a while, we recommended the Kafka receiver to teams that needed reliable Azure Event Hub ingestion at scale, not because it was the right tool, but because the Azure receiver simply wasn’t ready. Azure Event Hub’s Kafka compatibility mode served as a practical bridge, and the Kafka receiver provided the reliability features the Azure implementation lacked, including checkpointing and distributed ownership.

That’s no longer the case. The Azure Event Hub receiver now includes the same reliability guarantees that previously made us reach for Kafka. We now officially recommend using the native Azure Event Hub receiver.


What YOU Should Do Now

The receiver is now production-ready. We've fixed the data loss issues, automated partition management, and built in reliable failover, so Kafka compatibility mode is no longer necessary. If your pipeline still routes Event Hubs data through the Kafka receiver, move it to the native Azure Event Hub receiver.

If you want all of this without managing collector configuration by hand, try Bindplane. You can also reach out to the Bindplane team in the Community Slack if you need help getting started.