OpAMP for OpenTelemetry: Managing Collector Fleets and Introducing the New OpAMP Gateway Extension

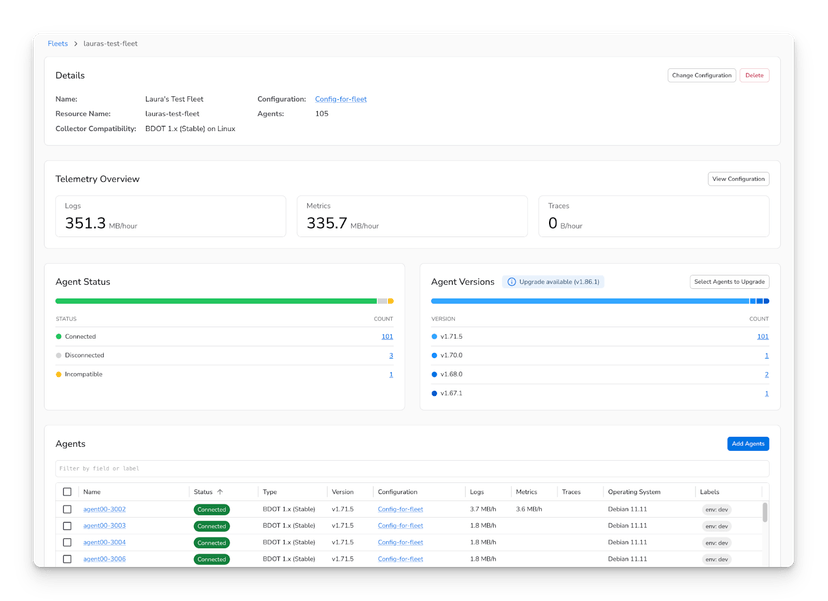

Today, Bindplane is launching the OpAMP Gateway Extension in alpha — a new component that extends OpAMP fleet management into network-segmented and firewalled environments where direct agent-to-server connectivity is not possible. It also addresses fleet scaling by fanning many agent connections into a small upstream pool, reducing connection load on the OpAMP server.

We also hope to donate the OpAMP Gateway Extension upstream to the OpenTelemetry project and welcome community contributions.

To understand what the gateway solves and how to deploy it, it helps to first understand OpAMP and the two implementation patterns it builds on.

The Open Agent Management Protocol (OpAMP) is a WebSocket-based protocol for remote management of agent fleets. It is not a telemetry protocol — it does not move traces, metrics, or logs. That is OTLP's job.

OpAMP handles the control plane: configuration delivery, health reporting, and lifecycle management.

This post covers all three layers:

- OpAMP Extension — in-process, read-only visibility

- OpAMP Supervisor — out-of-process, full lifecycle and config management

- OpAMP Gateway Extension (new, alpha) — relay and fan-in for segmented network topologies

OpAMP Gateway Extension: Now in Alpha

The OpAMP Gateway Extension is available today in alpha as part of the Bindplane Distro for OpenTelemetry Collector. It is ready for evaluation and testing. As an alpha release, configuration fields and behavior may change before general availability.

What it solves:

- Network isolation — agents in firewalled or segmented network environments can reach a local gateway rather than requiring a direct path to the OpAMP server

- Connection scaling — thousands of agent WebSockets are multiplexed into a small, configurable upstream pool, reducing server-side connection load

What it is:

- An OpenTelemetry Collector extension — not a separate binary

- Runs inside a collector at the network boundary

- Acts simultaneously as an OpAMP server (for downstream agents) and an OpAMP client (to the upstream OpAMP server)

- Delegates all authentication to the upstream server — no auth logic lives in the gateway itself

The rest of this post explains how OpAMP works, where the gateway fits, and how to configure a full end-to-end deployment.

Key OpAMP design decisions:

- Transport: WebSocket (persistent, bidirectional) or HTTP polling

- Connection direction: the agent initiates the connection — no inbound firewall rules required

- Messages: AgentToServer (status, health, effective config) and ServerToAgent (config updates, commands)

- Capabilities: negotiated on connect, with each agent declaring what it supports
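To make capability negotiation concrete, here is a minimal Go sketch of how a server might check the capability bit field an agent declares before pushing config. The type and function names are hypothetical, and only a handful of flags are reproduced. The bit values are our reading of the AgentCapabilities enum in the OpAMP specification, so check the spec's protobuf definitions before relying on them.

```go
package main

import "fmt"

// AgentCapabilities models OpAMP's capability bit field. Only a subset of
// flags is reproduced; values follow our reading of the spec's enum.
type AgentCapabilities uint64

const (
	ReportsStatus          AgentCapabilities = 0x00000001
	AcceptsRemoteConfig    AgentCapabilities = 0x00000002
	ReportsEffectiveConfig AgentCapabilities = 0x00000004
	ReportsHealth          AgentCapabilities = 0x00000800
	ReportsRemoteConfig    AgentCapabilities = 0x00001000
)

// Has reports whether every bit in want is set in c.
func (c AgentCapabilities) Has(want AgentCapabilities) bool {
	return c&want == want
}

// canPushConfig is a hypothetical server-side guard: only send remote
// config to agents that declared AcceptsRemoteConfig when they connected.
func canPushConfig(declared AgentCapabilities) bool {
	return declared.Has(AcceptsRemoteConfig)
}

func main() {
	extensionLike := ReportsStatus | ReportsEffectiveConfig | ReportsHealth
	supervisorLike := extensionLike | AcceptsRemoteConfig | ReportsRemoteConfig
	fmt.Println(canPushConfig(extensionLike))  // false
	fmt.Println(canPushConfig(supervisorLike)) // true
}
```

A bit field keeps the declaration compact, and a server can test any combination of flags with a single mask.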

In the direct model, each agent holds one WebSocket to the OpAMP server:

```yaml
# Minimal OpAMP client config
extensions:
  opamp:
    server:
      ws:
        endpoint: wss://app.bindplane.com/v1/opamp
        headers:
          Authorization: Secret-Key ${env:OPAMP_SECRET_KEY}
    capabilities:
      reports_health: true            # agent sends health status
      reports_effective_config: true  # agent reports running config
      # Accepting pushed config (accepts_remote_config) requires the
      # Supervisor; the in-process extension cannot apply it.
```

The Extension: Read-Only Visibility

The OpAMP Extension runs inside the collector process. It opens a WebSocket to the OpAMP server and reports the collector's health, description, and effective configuration. It cannot apply configuration changes.

Use it when: you want fleet visibility without remote config management.

```yaml
extensions:
  opamp:
    server:
      ws:
        endpoint: wss://bindplane.example.com/v1/opamp
        headers:
          Authorization: Secret-Key ${env:OPAMP_SECRET_KEY}
    capabilities:
      reports_health: true
      reports_effective_config: true
      # accepts_remote_config omitted: the extension cannot apply it

receivers:
  otlp:
    protocols:
      grpc:
        endpoint: 0.0.0.0:4317

exporters:
  otlphttp:
    endpoint: https://your-backend.example.com

service:
  extensions: [opamp]
  pipelines:
    logs:
      receivers: [otlp]
      exporters: [otlphttp]
```

Limitation: if the collector crashes, the extension crashes with it. The server loses visibility exactly when it matters most.

The Supervisor: Full Fleet Control

The Supervisor is a separate binary that wraps the collector process. It owns the OpAMP connection and manages the collector's full lifecycle: starting it, applying config changes, restarting it, and reporting success or failure upstream.

What it adds over the extension:

- Receives config from the server and writes it to disk

- Restarts or reloads the collector after config changes

- Reports whether config was successfully applied

- Survives collector crashes and reports failure state upstream

- Can receive and apply binary package updates

```yaml
# supervisor.yaml
server:
  endpoint: wss://app.bindplane.com/v1/opamp
  headers:
    Authorization: Secret-Key ${env:OPAMP_SECRET_KEY}
  tls:
    insecure: false

capabilities:
  accepts_remote_config: true     # receives config pushes from server
  reports_remote_config: true     # confirms config was applied
  reports_health: true
  reports_effective_config: true
  accepts_packages: true          # can receive binary updates
  reports_packages: true

agent:
  executable: /usr/bin/otelcol    # collector binary the supervisor manages

storage:
  directory: /var/lib/opamp       # persists state across restarts
```

The supervisor injects its own OpAMP extension config into the collector at startup. You do not configure that connection manually.

Use the supervisor for any production fleet where you need remote config management and reliable health reporting.

The Connectivity Problem

Both the extension and supervisor share one assumption: the collector can reach the OpAMP server directly. That assumption breaks in:

- Network-segmented data centers — only designated egress points have outbound access

- Multi-VPC architectures — cross-segment traffic is restricted

- Regulated industries — firewall rules are compliance requirements, not preferences

A second problem exists at scale: N agents mean N server-side WebSockets. At large fleet sizes this becomes a server-side bottleneck.

The OpAMP Gateway Extension solves both.

The Gateway Extension: How It Works

The OpAMP Gateway is an OpenTelemetry Collector extension (opampgateway) — not a separate binary. It runs inside a collector at the network boundary, reachable by agents inside the segment, with outbound access to the OpAMP server.

1. Connection Fan-In

The gateway maintains a fixed pool of upstream WebSocket connections, sized by the connections setting (default: 10). Each new agent is assigned to the upstream connection with the fewest active agents (least-connections). The OpAMP server sees only that fixed number of connections, regardless of agent count.

2. Bidirectional Message Relay

The gateway forwards raw OpAMP messages without transformation:

- AgentToServer messages are forwarded upstream on the gateway's connection

- ServerToAgent responses are routed back to the correct agent via an internal agent-to-connection mapping

Agents have no awareness they are talking to a proxy. The server has no awareness of individual agent connections.
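The routing half of the relay can be sketched as a map from agent instance UID to the downstream connection. This Go sketch assumes the gateway can read the instance UID from each message, since both AgentToServer and ServerToAgent carry an instance_uid field. The payload bytes pass through unchanged. downstream, relay, and routeToAgent are hypothetical names.

```go
package main

import (
	"errors"
	"fmt"
)

// downstream is a hypothetical stand-in for one agent's WebSocket.
type downstream struct {
	uid   string
	inbox [][]byte // messages delivered to this agent
}

// relay sketches the gateway's routing table. Payloads are opaque bytes;
// only the instance UID is used to pick a destination.
type relay struct {
	agents map[string]*downstream // instance UID -> agent connection
}

// register records an agent's connection when its first AgentToServer
// message is forwarded upstream.
func (r *relay) register(a *downstream) { r.agents[a.uid] = a }

// routeToAgent delivers a ServerToAgent payload that arrived on an
// upstream connection to the agent it is addressed to.
func (r *relay) routeToAgent(uid string, raw []byte) error {
	a, ok := r.agents[uid]
	if !ok {
		return errors.New("no connected agent for instance UID " + uid)
	}
	a.inbox = append(a.inbox, raw) // bytes passed through untouched
	return nil
}

func main() {
	r := &relay{agents: map[string]*downstream{}}
	a, b := &downstream{uid: "uid-a"}, &downstream{uid: "uid-b"}
	r.register(a)
	r.register(b)
	_ = r.routeToAgent("uid-b", []byte("ServerToAgent for b"))
	fmt.Println(len(a.inbox), len(b.inbox)) // 0 1
	fmt.Println(r.routeToAgent("uid-z", nil))
}
```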

3. Authentication Delegation

The gateway contains no auth logic. Every connection decision is delegated to the upstream OpAMP server via a custom message handshake:

The gateway forwards the agent's HTTP headers and remote address upstream before upgrading the connection. If the upstream server does not respond within 30 seconds, the agent receives a 504.

Auth policy, credential rotation, and access control all live exclusively in the OpAMP server. The gateway enforces them automatically without reconfiguration.

4. Observability

The extension emits the following metrics, all tagged with a direction attribute (upstream or downstream):

OpAMP Server Requirements

While the OpAMP Gateway is supported in Bindplane, any OpAMP server can add support for the OpAMP Gateway. To support the gateway, an OpAMP server must handle two things correctly.

1. Support the Custom Message Handshake

The gateway uses two custom messages to delegate agent authentication upstream. The server must handle the connect message and send a connect result in response:

- OpampGatewayConnect (type: connect): sent by the gateway when an agent attempts to connect. Contains the agent's HTTP headers and remote address. The server must respond with an OpampGatewayConnectResult.

- OpampGatewayConnectResult (type: connectResult): sent by the server to accept or reject the agent connection. Must include an HTTP status code and, optionally, headers. The gateway correlates responses to requests via a request_uid field.

A server that does not implement these message types cannot authenticate agents through the gateway.

2. Do Not Assume One Agent Per Connection

In a direct OpAMP deployment, each WebSocket connection maps to exactly one agent. Behind a gateway, a single upstream WebSocket connection carries messages from many agents. The server must route messages by InstanceUid from the protobuf payload — not by connection identity.

Any server-side logic that assumes one connection equals one agent will misroute messages or incorrectly attribute agent state.
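A minimal Go sketch of connection-agnostic bookkeeping follows. It assumes the server has already decoded each AgentToServer message and knows which connection the message arrived on. registry and its methods are hypothetical names. The key points are that state is keyed by instance UID and that a dropped connection affects every agent it carried.

```go
package main

import "fmt"

// agentState is what the server tracks per agent (fields illustrative).
type agentState struct {
	Healthy   bool
	Connected bool
	ConnID    string // upstream connection the agent was last seen on
}

// registry keys state by instance UID, never by connection, and keeps a
// reverse index because one gateway connection can carry many agents.
type registry struct {
	agents map[string]*agentState         // instance UID -> state
	byConn map[string]map[string]struct{} // connection ID -> instance UIDs
}

func newRegistry() *registry {
	return &registry{agents: map[string]*agentState{}, byConn: map[string]map[string]struct{}{}}
}

// onAgentToServer records a status report. uid comes from the decoded
// AgentToServer instance_uid; connID names the WebSocket it arrived on.
func (r *registry) onAgentToServer(connID, uid string, healthy bool) {
	st, ok := r.agents[uid]
	if !ok {
		st = &agentState{}
		r.agents[uid] = st
	}
	if st.ConnID != "" && st.ConnID != connID {
		delete(r.byConn[st.ConnID], uid) // agent now arrives via another connection
	}
	st.Healthy, st.Connected, st.ConnID = healthy, true, connID
	if r.byConn[connID] == nil {
		r.byConn[connID] = map[string]struct{}{}
	}
	r.byConn[connID][uid] = struct{}{}
}

// onDisconnect marks every agent multiplexed on connID as disconnected and
// returns how many were affected, instead of assuming one agent per socket.
func (r *registry) onDisconnect(connID string) int {
	n := 0
	for uid := range r.byConn[connID] {
		r.agents[uid].Connected = false
		n++
	}
	delete(r.byConn, connID)
	return n
}

func main() {
	r := newRegistry()
	r.onAgentToServer("gw-conn-1", "agent-a", true)
	r.onAgentToServer("gw-conn-1", "agent-b", false)
	r.onAgentToServer("gw-conn-2", "agent-c", true)
	fmt.Println(len(r.agents), len(r.byConn))  // 3 2
	fmt.Println(r.onDisconnect("gw-conn-1"))   // 2
	fmt.Println(r.agents["agent-c"].Connected) // true
}
```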

Configuring the Gateway End-to-End

Layer 1 — Gateway Collector

```yaml
# gateway-collector-config.yaml
extensions:
  opampgateway:
    server:
      endpoint: wss://app.bindplane.com/v1/opamp
      headers:
        Authorization: "Secret-Key ${env:OPAMP_SECRET_KEY}"
      connections: 3
    listener:
      endpoint: 0.0.0.0:4320
      # With tls set, agents must connect using wss://
      tls:
        cert_file: /etc/otel/server.crt
        key_file: /etc/otel/server.key

# The same collector handles telemetry traffic (combined deployment pattern)
receivers:
  otlp:
    protocols:
      grpc:
        endpoint: 0.0.0.0:4317

exporters:
  otlphttp:
    endpoint: https://your-backend.example.com

service:
  extensions: [opampgateway]
  pipelines:
    traces:
      receivers: [otlp]
      exporters: [otlphttp]
```

Combined deployment: the same collector instance can act as both an OTel Gateway (receiving OTLP from agents) and run the opampgateway extension. One deployment handles both telemetry and management traffic.

Layer 2 — Agent Side

Agents point their OpAMP endpoint at the gateway.

Option A — Example using Bindplane Distro for OpenTelemetry Collector (manager.yaml)

```yaml
# manager.yaml
endpoint: ws://gateway-host:4320/v1/opamp
secret_key: <secret-key>
# optional: agent_id, labels, agent_name
# for TLS: use wss:// and add tls_config (ca_file, insecure_skip_verify)
```

Option B — Supervisor

Note: This option works with any collector distro that the Supervisor can manage. Only the gateway collector needs to include the OpAMP Gateway Extension.

```yaml
# supervisor_config.yaml
server:
  endpoint: "ws://gateway-host:4320/v1/opamp"  # use wss:// if the gateway listener has TLS enabled
  headers:
    Authorization: "Secret-Key <secret-key>"

capabilities:
  accepts_remote_config: true
  reports_remote_config: true
  reports_health: true
  reports_effective_config: true

agent:
  executable: /usr/bin/otelcol

storage:
  directory: /var/lib/opamp
```

Compared with a direct connection, only the endpoint changes: it points at the gateway instead of the OpAMP server.

Deployment Considerations

High availability: run multiple gateway collectors behind a load balancer. Agents connect to the load balancer address. Each gateway maintains its own upstream connection pool independently.

Tuning connections: the current default is 10; scale it up or down as needed. Fewer upstream connections reduce server-side load but may increase latency, because more agents share each connection. Increase the value if gateway metrics show upstream connection saturation. The right number depends on agent count and message frequency.

TLS: configure independently on each side. listener.tls secures agent-facing connections. The upstream connection uses the scheme in server.endpoint — wss:// for TLS, ws:// for plain. server.tls contains additional TLS configuration parameters.
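Because the URL scheme determines whether TLS is used, a mismatch between an agent's endpoint and the gateway listener shows up only as a failed connection. Below is a hypothetical pre-flight check in Go. It assumes the gateway listener either has listener.tls set or serves plain WebSocket, and checkAgentEndpoint is an illustrative name. The same scheme rule applies to the upstream server.endpoint.

```go
package main

import (
	"fmt"
	"net/url"
)

// checkAgentEndpoint is a hypothetical pre-flight check: an agent must use
// wss:// when the gateway listener has TLS configured, and ws:// otherwise.
func checkAgentEndpoint(endpoint string, listenerTLS bool) error {
	u, err := url.Parse(endpoint)
	if err != nil {
		return err
	}
	switch {
	case u.Scheme == "wss" && listenerTLS, u.Scheme == "ws" && !listenerTLS:
		return nil
	case u.Scheme == "ws" || u.Scheme == "wss":
		return fmt.Errorf("scheme %q does not match listener TLS=%t", u.Scheme, listenerTLS)
	default:
		return fmt.Errorf("unsupported scheme %q", u.Scheme)
	}
}

func main() {
	fmt.Println(checkAgentEndpoint("ws://gateway-host:4320/v1/opamp", true))  // scheme "ws" does not match listener TLS=true
	fmt.Println(checkAgentEndpoint("wss://gateway-host:4320/v1/opamp", true)) // <nil>
}
```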

Alpha status: the opampgateway extension is under active development in the Bindplane Distro for OpenTelemetry Collector repository. Configuration field names may change before general availability.

Conclusion

The OpAMP Gateway brings centralized fleet management to environments where direct agent-to-server connectivity was never an option. If you're evaluating the gateway, have questions about the configs in this post, or want to follow along as the extension develops toward general availability, join the Bindplane Slack community. The entire Bindplane team, myself included, is there and happy to help.